One of the major technological developments of the past decade has been the steady rise of cloud development, as more and more companies have moved away from traditional on-premises software. In recent years, the “on-premises vs. cloud” debate has leaned heavily towards the latter. This change has not only affected the software engineering industry. It has also changed the way companies establish their internal processes, regardless of their industry.

HTEC Group has been quick to realize the potential of cloud technology. Our extensive knowledge and experience in this area enable our partners to continue their growth through the digital transformation of their businesses and reliance on leaner and more efficient platforms. Here is one example of utilizing our expertise in enterprise cloud services.

Client requirement

Our partner is a global maritime and land satellite service provider. To enable their own clients in specific regions to communicate with local data centers, and thus reduce costs and increase speed, they were looking to implement a global network of regional data centers.

After careful examination and consideration, we chose Microsoft Azure as the appropriate platform for this objective.

The primary motivation for migrating the previous on-premises setup was that Azure offers global component distribution across a large network of local data centers around the world. Additionally, the partner wished to minimize the workload of its DevOps team in order to shorten time-to-market. Compared to the existing on-premises solution, the Azure platform requires far less maintenance because it is fully managed.

Furthermore, Azure contains a number of components and services that enable us to improve a solution and make it more robust, faster, and more scalable. Thanks to automatic scaling, we are able to resolve performance issues before they reach the end customer, which avoids the difficulty and expense of having the client discover them in production.

Deployment time needed to be significantly decreased because long deployments can adversely affect business processes. With Azure, re-deploying one component does not engage other components unless they depend on it. Because deploying an individual component does not affect the entire environment, the overall process is considerably faster.
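The idea that a re-deployment only touches a component and whatever depends on it can be sketched in a few lines. The following is a minimal illustration, not the project's actual tooling: the component names and the `DEPENDS_ON` graph are hypothetical, and the function simply walks the dependency graph to find what a change would engage.

```python
from collections import deque

# Hypothetical component graph: each key depends on the components in its list.
DEPENDS_ON = {
    "order-api": ["service-bus"],
    "order-store-function": ["service-bus", "cosmos-db"],
    "fulfillment-engine": ["service-bus"],
    "reporting": ["cosmos-db"],
    "service-bus": [],
    "cosmos-db": [],
}


def components_to_redeploy(changed, depends_on):
    """Return the changed component plus everything that depends on it,
    directly or transitively."""
    # Invert the graph: component -> components that depend on it.
    dependents = {}
    for name, deps in depends_on.items():
        dependents.setdefault(name, [])
        for dep in deps:
            dependents.setdefault(dep, []).append(name)

    affected = {changed}
    queue = deque([changed])
    while queue:
        current = queue.popleft()
        for dependent in dependents.get(current, []):
            if dependent not in affected:
                affected.add(dependent)
                queue.append(dependent)
    return affected
```

In this sketch, changing a leaf component such as `reporting` engages only that component, while changing a shared one such as `cosmos-db` also engages the components that rely on it.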

Finally, manual deployment introduces a risk of human error, which slows the process down even further. This is why we use tools such as Azure DevOps to perform automatic, one-click deployments, which reduces the risk of mistakes and standardizes the entire deployment process.

Azure hands-on

Controlling the environment

The existing on-premises solution required the involvement of a multi-person external team, tasked with setup, maintenance, security, and validation of infrastructure. All this combined often resulted in long and slow processes due to protocols. Azure resolves this issue by removing the reliance on external teams — we control the environment and our protocols are followed. This significantly accelerates the process, makes it more efficient, and produces visible results in a short time.

Deployment automation

The choice of Azure enabled us to establish CI/CD pipelines, which was another improvement over the pre-existing environment. We chose Azure DevOps because of its native integration with Azure. Setting up a CI/CD pipeline has enabled automated deployments and infrastructure setup. Consequently, DevOps-related work has decreased dramatically, enabling our partners to dedicate more time to product development.

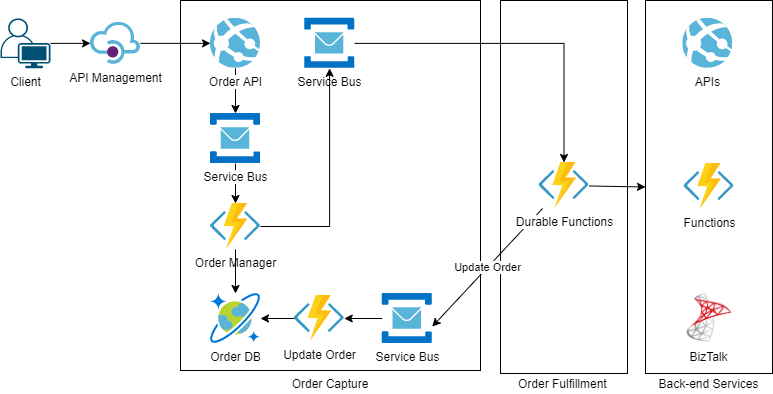

Example of an ordering module

A segment of our solution is based on an ordering module that enables customers to order specific services. It includes a REST API, hosted on App Service, which accepts clients’ orders. The order API has its own logic for tasks such as order enrichment and validation, and it posts messages to Service Bus, the central messaging mechanism that enables communication between Azure components. The components are decoupled and the design is event-driven: Functions are triggered by messages sent to Service Bus.

Orders are stored in Cosmos DB, and messages are then posted to the fulfillment engine, which is composed of orchestrated business processes implemented with Azure Durable Functions. The Durable Functions call external and internal APIs. A small portion of our internal APIs is still hosted on the BizTalk integration platform, and we are planning to migrate those to Azure-hosted services. The rest of the APIs are hosted either on Azure or by external companies.
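The flow described above can be sketched with standard-library Python only. This is an illustrative model under stated assumptions, not the project's code: `InMemoryBus` stands in for Service Bus, a plain dictionary stands in for Cosmos DB, and the topic names, field names, and helper functions are all hypothetical.

```python
import json
import uuid
from collections import defaultdict


class InMemoryBus:
    """Stand-in for Service Bus: named topics with subscribed handlers."""

    def __init__(self):
        self._handlers = defaultdict(list)

    def subscribe(self, topic, handler):
        self._handlers[topic].append(handler)

    def publish(self, topic, message):
        # Serialize the message so publishers and subscribers share data only,
        # never object references (this keeps the components decoupled).
        body = json.dumps(message)
        for handler in list(self._handlers[topic]):
            handler(json.loads(body))


def make_order_api(bus):
    """Order API: validate, enrich, then post to the bus (hypothetical rules)."""
    def submit_order(payload):
        if not payload.get("customer_id") or not payload.get("service"):
            raise ValueError("order needs customer_id and service")
        order = {**payload, "id": str(uuid.uuid4()), "status": "received"}
        bus.publish("orders.received", order)
        return order["id"]
    return submit_order


def make_store_order(bus, order_store):
    """Message-triggered handler: persist the order, then notify fulfillment."""
    def store_order(order):
        order_store[order["id"]] = order  # stand-in for a Cosmos DB write
        bus.publish("orders.stored", {"order_id": order["id"]})
    return store_order


# Wiring: each component knows only the bus, not the other components.
bus = InMemoryBus()
order_store = {}
fulfillment_inbox = []
bus.subscribe("orders.received", make_store_order(bus, order_store))
bus.subscribe("orders.stored", fulfillment_inbox.append)
submit_order = make_order_api(bus)
order_id = submit_order({"customer_id": "c-1", "service": "data-plan"})
```

Because the API and the handlers communicate only through messages, any of them can be replaced or re-deployed without the others needing to change, as long as the message shape stays the same.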

Logic Apps is not used as intensively as some other services. We use it for long-running background processes that synchronize data from external systems to our systems. We mainly use Functions because, compared to Logic Apps, they can be developed and tested in a local development environment, and they are more maintainable and easier to monitor.
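To illustrate what local testability can look like, here is a small sketch of an orchestration written as a generator and driven by a test double. It is only loosely modeled on the generator style used by the Durable Functions Python programming model; the source does not say which language the project's Functions are written in, and `FakeContext`, `run_locally`, and the activity names are hypothetical.

```python
def fulfil_order(context, order):
    """Orchestrator as a generator: each yield hands one activity call
    to whatever is driving it and receives the activity's result back."""
    provisioned = yield context.call_activity("provision_service", order)
    invoice = yield context.call_activity("create_invoice", provisioned)
    return {"order_id": order["id"], "invoice": invoice}


class FakeContext:
    """Test double: describes the requested activity instead of running it."""

    def call_activity(self, name, payload):
        return (name, payload)


def run_locally(orchestrator, order, activities):
    """Drive the generator, executing each requested activity with a
    plain local function from the `activities` mapping."""
    gen = orchestrator(FakeContext(), order)
    try:
        name, payload = next(gen)
        while True:
            name, payload = gen.send(activities[name](payload))
    except StopIteration as done:
        return done.value


# Local stand-ins for the real activities (hypothetical behavior).
local_activities = {
    "provision_service": lambda o: {**o, "provisioned": True},
    "create_invoice": lambda o: f"INV-{o['id']}",
}
result = run_locally(fulfil_order, {"id": "42"}, local_activities)
```

The orchestration logic runs here without any cloud resources, which is the kind of fast feedback loop that is harder to get from a designer-based workflow.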

Architecture diagram

Tech stack

- App Service
- Functions
- Durable Functions
- Logic Apps
- Storage
  - Cosmos DB
  - Azure SQL Database
  - Table storage
  - Blob storage
  - Data Lake
- Data Lake Analytics
- Analysis Services
- Data Factory
- Service Bus
  - Queue
  - Topic
  - Subscriptions
  - Filters
- Hybrid Cloud
  - WCF Relay
  - Hybrid Connections
- Cloud Monitoring with Application Insights

Staying up-to-date

Due to the recent popularity of Azure, Microsoft is quick to deliver updates and fix bugs, but not as quick with documentation updates. Nowadays, any conference or industry event dedicated to Microsoft is bound to cover Azure, which helps us keep up with the latest developments. These events also give us frequent opportunities to speak to Microsoft VIPs. Additionally, our partner actively cooperates with Microsoft and with Microsoft partner companies, which also helps us get timely information, expand our knowledge, and adjust our approach.

What’s next

Future steps for the project include moving away from the on-premises BizTalk platform and performing the entire implementation through Azure components. This is how we will avoid downtime that affects every app. The migration of the entire infrastructure to the cloud is also in progress. Intelligent services such as Bot Framework and LUIS, along with various other data processing and analysis services, are also within the scope of our interest. HTEC’s BI experts already use Analysis Services for data analysis and predictions, Power BI for reporting, and Data Lake for big data storage, but full usage is still in the works. Furthermore, SQL Server Integration Services (SSIS) will be replaced by Data Factory for data migration and transfer.

The benefits of cloud for business processes

Cloud platforms are very much in vogue, and a growing number of large enterprise systems have either already made their migration to cloud or are giving it serious consideration. Our experiences on partner projects have revealed a number of arguments in favor of cloud-based architecture:

- It is a popular area supported by cutting-edge solutions and technologies emerging on a daily basis

- It decreases time-to-market

- It provides the systems with a greater degree of stability and robustness

- Data and components can be stored on globally distributed data centers, which provides high availability, durability and redundancy

- Pricing models are adjusted to each component

- It has a favorable effect on costs

The wave of the cloud has gained unstoppable momentum, and catching it early and watching it transform multiple industries has been, and continues to be, a fascinating ride. If you’re curious to discover how cloud technology can power further growth of your business, HTEC is a proven cloud technology partner that can provide the vision, the know-how, and the hands-on experience that ensure an efficient and impactful digital transformation.