It only takes a second: A message on your phone or a child distracting you in the backseat, and, before you know it, your car crashes into the back of the vehicle that suddenly stops in front of you.

According to the World Health Organization (WHO), traffic crashes cause approximately 1.3 million deaths worldwide each year, and human error causes 95% of them.

While fully self-driving cars are still a distant future, the automotive industry has been investing billions in automation systems over the past decade.

Automotive safety features such as adaptive cruise control or blind-spot monitoring are becoming standard as ADAS technology becomes more popular within various car models. In this post, we will discuss the various technologies that are reimagining the driving experience.

What is ADAS technology?

Advanced driver-assistance systems (ADAS) are electronic systems in a vehicle that use advanced technologies to assist the driver, including:

- Pedestrian detection/avoidance

- Lane departure warning/correction

- Traffic sign recognition

- Automatic emergency braking

- Blind-spot detection

- Auto park/Smart park

- Driver monitoring systems and occupancy monitoring systems (DMS and OMS)

Powered mostly by cameras, LiDAR (light detection and ranging), radar, and infrared sensors, ADAS technology is becoming more available in vehicles to improve safety and comfort. The automotive industry is also investing heavily to reach Level 4 and Level 5 of autonomous driving. Considering we are still far from seeing fully autonomous vehicles on the roads, current ADAS technology features focus primarily on active safety (accident prediction and prevention) and assistance systems.

Start-ups and established OEMs have invested $106 billion in autonomous-driving capabilities since 2010, most of which has gone toward enhancing ADAS technology, which speaks volumes about how impactful this technology is.

According to a National Safety Council report published in 2019, ADAS technology can prevent 62% of total traffic deaths, primarily thanks to pedestrian automatic braking features and lane-keeping. ADAS features also have the potential to prevent or mitigate about 60% of total traffic injuries.

Now, let’s explore three ADAS technology features that are making a difference in the automotive industry.

Driver monitoring and occupancy monitoring

One of the major causes of traffic accidents today is drowsy and distracted driving, e.g., paying too much attention to a mobile device or other passengers.

Sophisticated driver monitoring can help prevent traffic accidents by alerting the driver when the system detects the individual is distracted or falling asleep at the wheel.

Driver monitoring systems will soon be a crucial feature for OEMs to get the highest safety rating from the European New Car Assessment Programme (Euro NCAP), a voluntary car safety performance assessment program.

A high rating from this organization is a well-established mark of quality and safety, encouraging car manufacturers to adapt their new car models to the safety standards set by the Euro NCAP organization. This safety rating aims to reduce driver errors caused by distraction, drowsiness, or other abnormal vital conditions.

How driver monitoring works

Driver monitoring technology is complex and requires years of experience and R&D in different domains, including deep learning, image processing, computer vision, camera technologies, and embedded device design and development.

What is DMS?

The idea behind a driver monitoring system (DMS) is to accurately detect the driver’s face and eyes with an interior camera, track them, and monitor the driver’s state. This should help eliminate one of the leading causes of accidents by identifying all types of distracted driving (i.e., visual, manual, cognitive). Any distraction, drowsiness, or sleep/microsleep state is detected immediately, and the driver is alerted in time to take appropriate action before an accident happens.
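As a rough illustration of how a per-frame eye state could be turned into a drowsiness alert, the sketch below tracks the share of recent frames in which the eyes were closed (a PERCLOS-style measure). The class name `DrowsinessMonitor`, the window length, and the alert ratio are illustrative assumptions, not values taken from any production DMS, and the eye-closed flag is assumed to come from an upstream face and eye tracker.

```python
from collections import deque


class DrowsinessMonitor:
    """Tracks the share of recent frames with closed eyes (PERCLOS-style)."""

    def __init__(self, window_frames=90, alert_ratio=0.3):
        # Both defaults are illustrative assumptions, not calibrated values.
        self.frames = deque(maxlen=window_frames)
        self.alert_ratio = alert_ratio

    def update(self, eyes_closed):
        """Record one frame; return True if the driver should be alerted."""
        self.frames.append(bool(eyes_closed))
        if len(self.frames) < self.frames.maxlen:
            return False  # not enough history yet to judge
        closed_ratio = sum(self.frames) / len(self.frames)
        return closed_ratio >= self.alert_ratio
```

A sliding window is used here so that a single blink does not trigger an alert, while sustained eye closure does.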

In perfect conditions, all these unwanted behaviors can be easily detected. In real life, however, complex scenarios can and will occur: poor lighting can reduce face visibility, or the driver might be wearing sunglasses that hide the eyes.

Advanced near-infrared (NIR) cameras, specially designed for driver state monitoring, must be used to overcome all these issues. They capture the high-quality images required for the driver’s facial features and head tracking, while NIR image sensors minimize the influence of environmental lighting on face images. This technology enables complete visibility of the driver’s face during night driving or any other difficult lighting conditions.

Additionally, DMS powered by AI can efficiently detect if the driver eats, drinks, smokes, uses the phone while driving, or does not have a seat belt fastened.

What is OMS?

Occupancy monitoring systems (OMS) are a relatively new technology compared to DMS. An OMS collects information about all occupants of the vehicle to increase their safety and security and to enable ambient personalization (e.g., biometrics/health, forgotten child, intrusion detection).

Together with DMS, OMS provides complete cabin monitoring, called interior sensing, and offers additional safety levels for all car passengers. Interior sensing can monitor the state of all passengers as well as pets. OMS detects all occupants and enables automatic management of safety features such as enabling child lock if a child is present.
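The kind of rule an OMS might apply once occupants have been detected can be sketched as follows. This is a minimal illustration, assuming an upstream classifier already labels each occupant. The `Occupant` type, the seat names, and the action strings are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Occupant:
    seat: str      # hypothetical seat name, e.g. "rear_left"
    category: str  # "adult", "child", or "pet", as labeled upstream


def cabin_actions(occupants, ignition_on):
    """Map detected occupants to safety actions (illustrative rules only)."""
    actions = set()

    # Enable the child lock when a child is detected on a rear seat.
    if any(o.category == "child" and o.seat.startswith("rear") for o in occupants):
        actions.add("enable_child_lock")

    # Raise a forgotten-occupant alert if only children or pets remain
    # in the cabin after the ignition is switched off.
    if not ignition_on:
        vulnerable = any(o.category in ("child", "pet") for o in occupants)
        adult_present = any(o.category == "adult" for o in occupants)
        if vulnerable and not adult_present:
            actions.add("forgotten_occupant_alert")

    return actions
```

For example, a lone child on a rear seat with the ignition off would produce both the child-lock and the forgotten-occupant actions.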

Considering that the basic functionalities of DMS and OMS technology are already present in some premium cars, we can expect even more sophisticated DMS that can detect the driver’s emotions or even decide if the driver is incapable of driving.

For example, ADAS technology can detect if the driver is drunk by looking for pupil dilation and monitoring their behavior during the drive. Also, it will be possible to automatically manage interior features of the vehicle (e.g., volume moderation, lighting, and temperature settings) by identifying and interpreting the emotions of all passengers on board.

Thanks to Euro NCAP, DMS and OMS technology will practically be a requirement for any new car launched on the European market. This means DMS and OMS are priorities worldwide for many car manufacturers, AI companies, and camera manufacturers.

A vision-based system

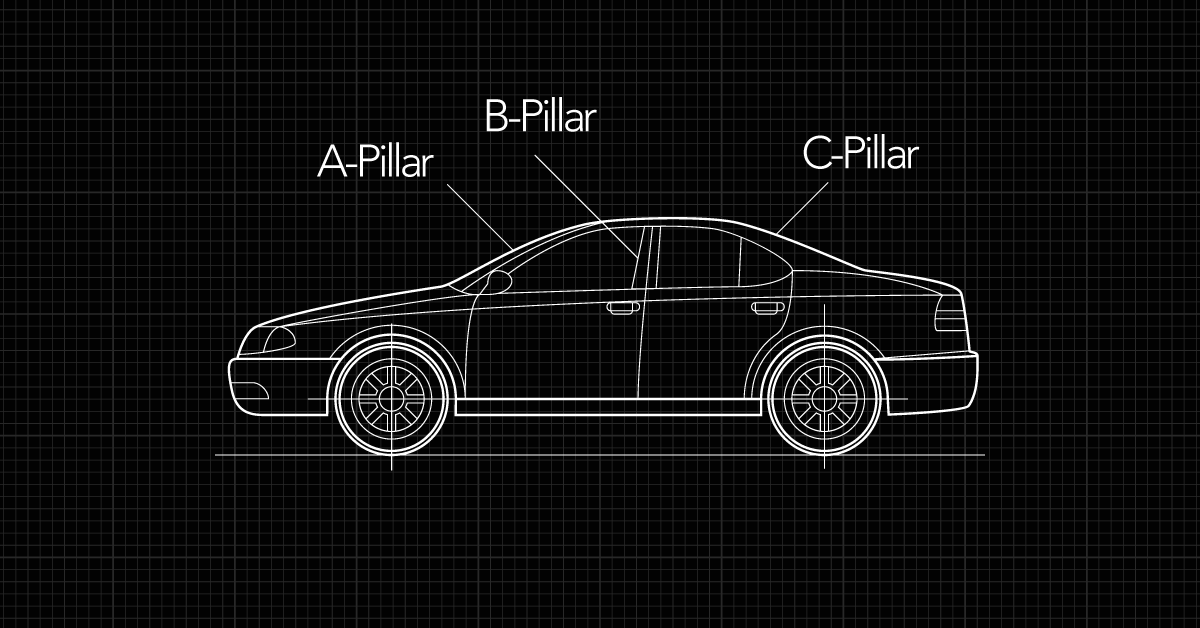

With modern cars, there is another blind spot that most people do not even know exists. It’s called an A-pillar blind spot.

Pillars are the parts of a car that connect the roof to the body. The A-pillars are on both sides of the front windshield, the B-pillars are behind the front doors, and the C-pillars are on both sides of the rear window. As engineers have designed cars to better protect passengers, the A-pillars have grown much wider. Also, there is room for an airbag in the A-pillar, significantly affecting its size.

Therefore, these design alterations can result in a serious reduction in visibility. The problem usually manifests itself on the driver’s side of the car, often when making a left turn. The A-pillar blind spot can block a driver’s view of a pedestrian or a cyclist.

Currently, there are no off-the-shelf solutions for this problem on the market, but with the tremendous progress of ADAS technology, we expect to overcome this problem soon.

A practical solution would be a vision-based system with a wide-angle front camera and a flexible display embedded in the A-pillar. The video stream from the camera mounted in front of the A-pillar is shown on the display on the inside of the A-pillar. Additionally, a DMS camera detects the position and movement of the driver’s head, so the real-time video stream on the A-pillar display can be adjusted to the driver’s point of view.
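To make the head-tracking adjustment concrete, here is a simplified geometric sketch of how the crop shown on the A-pillar display could follow the driver’s head. It assumes a forward-looking camera at the pillar whose pixel column varies linearly with bearing, and a vehicle frame with x pointing forward and y to the left. The function name, coordinate convention, field of view, and image width are all assumptions made for illustration.

```python
import math


def crop_center_px(head_x_m, head_y_m, pillar_x_m, pillar_y_m,
                   image_width_px=1920, camera_fov_deg=120.0):
    """Return the image column on which to center the A-pillar crop.

    Assumes a vehicle frame with x forward and y to the left, and a camera
    whose pixel column varies linearly with bearing (a simplification).
    """
    # Bearing of the driver's line of sight through the pillar:
    # 0 degrees is straight ahead, positive values point to the left.
    bearing_deg = math.degrees(
        math.atan2(pillar_y_m - head_y_m, pillar_x_m - head_x_m)
    )

    # Clamp to the camera's field of view.
    half_fov = camera_fov_deg / 2
    bearing_deg = max(-half_fov, min(half_fov, bearing_deg))

    # Directions further to the left map to smaller column indices.
    return round((half_fov - bearing_deg) / camera_fov_deg * (image_width_px - 1))
```

When the driver leans to the right, the line of sight through the pillar points further left, so the crop moves toward the left edge of the camera image.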

Considering all new cars will have front and interior cameras installed, this solution is practical and easy to achieve.

Automatic emergency braking

As described above, a DMS can detect driver distraction and alert the driver within seconds. Emergency braking or obstacle avoidance, however, should be triggered only in critical situations. Drivers can face such situations even when they are not distracted, e.g., when a vehicle unexpectedly stops in front of them.

To equip a car with such advanced systems, it is necessary to use data fusion from sensors such as radars, LiDARs, and different cameras. A high-frequency radar sensor is mandatory to detect the presence of an obstacle and calculate the distance from it.
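One common way to decide whether a situation is critical is time to collision (TTC): the measured distance divided by the closing speed. The sketch below shows that calculation together with a two-stage response. The function names and the threshold values are illustrative assumptions, not figures from any specific braking system.

```python
import math


def time_to_collision(range_m, closing_speed_mps):
    """Seconds until impact if nothing changes; infinite if the gap is not shrinking."""
    if closing_speed_mps <= 0:
        return math.inf
    return range_m / closing_speed_mps


def braking_action(range_m, closing_speed_mps, warn_ttc_s=2.5, brake_ttc_s=1.2):
    """Pick a response from TTC. The thresholds are illustrative assumptions."""
    ttc = time_to_collision(range_m, closing_speed_mps)
    if ttc <= brake_ttc_s:
        return "brake"
    if ttc <= warn_ttc_s:
        return "warn"
    return "none"
```

With these assumed thresholds, an obstacle 20 m ahead closing at 10 m/s (TTC of 2 s) would trigger a warning, while the same closing speed at 10 m would trigger braking.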

On the other hand, to distinguish shapes and colors, and quickly identify the type of objects, we can use high-resolution cameras. The radar helps detect any obstacle on the road, but the camera is required to determine the type of the obstacle (e.g., pedestrian or another vehicle). Hence, the fusion of data from multiple sensors is mandatory to get reliable ADAS applications.
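A very simplified view of this fusion step: each radar track supplies distance and closing speed, and the camera detection closest in bearing supplies the object type. The data classes, the `fuse` function, and the 3-degree matching gate below are assumptions for illustration. Real systems use far more elaborate tracking and association.

```python
from dataclasses import dataclass


@dataclass
class RadarTrack:
    range_m: float
    bearing_deg: float
    closing_speed_mps: float  # positive when the gap is shrinking


@dataclass
class CameraObject:
    bearing_deg: float
    label: str  # e.g. "pedestrian" or "vehicle"


@dataclass
class FusedObject:
    range_m: float
    closing_speed_mps: float
    label: str


def fuse(radar_tracks, camera_objects, gate_deg=3.0):
    """Attach the nearest camera label (by bearing) to each radar track."""
    fused = []
    for track in radar_tracks:
        nearest = min(
            camera_objects,
            key=lambda obj: abs(obj.bearing_deg - track.bearing_deg),
            default=None,
        )
        # Accept the match only if it lies within the assumed bearing gate.
        if nearest is not None and abs(nearest.bearing_deg - track.bearing_deg) <= gate_deg:
            label = nearest.label
        else:
            label = "unknown"
        fused.append(FusedObject(track.range_m, track.closing_speed_mps, label))
    return fused
```

Radar tracks without a nearby camera detection are kept with the label "unknown" rather than dropped, so an obstacle the camera cannot see is still reported.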

Reliability is critical for these systems. They should work properly in any conditions—day or night, rain or fog, etc. However, considering all sensors have some limitations, we recommend using as many different sensors as possible to overcome these limitations.

For example, fog or rain reduces visibility, making conventional cameras and LiDARs unreliable. Radars are far less affected by weather, so they can detect almost any obstacle in bad conditions. However, radar wavelengths are much longer than those of light, which limits resolution and makes small objects hard to detect. So, to detect a small animal on the road in terrible weather, we can introduce additional sensors such as thermal cameras, which are extremely valuable to driver-assistance systems.

Going beyond the wheel

The global ADAS market is projected to grow to USD 83 billion by 2030, and demand for advanced assistance systems will grow rapidly in the next 10 years.

There are a few reasons for this:

- Car manufacturers want a good safety reputation

- ADAS technology can significantly raise the vehicle price

- Some functions, e.g., driver monitoring, will soon be regulated by law

These trends — advances in autonomous technology, the shift from a hardware- to a software-defined vehicle, and ever-changing customer expectations — are raising fundamental questions about the purpose of a vehicle.

On the one hand, driver assistance technologies create challenges for traditional automakers. But they also present a world of exciting new opportunities.

The automotive industry is playing a key role in this new digital era. So, companies must get creative about reshaping their products, embrace change, and collaborate beyond industry lines to find new ways to innovate.
